Blackwell is not just a new GPU. It is a platform shift that bundles compute, memory, interconnect, and rack-scale design into a single planning unit. The flagship GB200 Grace Blackwell pairs Grace CPUs with B200 GPUs and binds them through NVLink and NVSwitch so many accelerators act like one giant device. Nvidia’s own description highlights a unified dual-die GPU with a 10 terabytes per second on-package link, built to run massive models with less orchestration overhead. For solution teams, that means fewer bottlenecks inside the node and more attention on power, cooling, and east-west traffic outside the node.

From delays to volume shipments

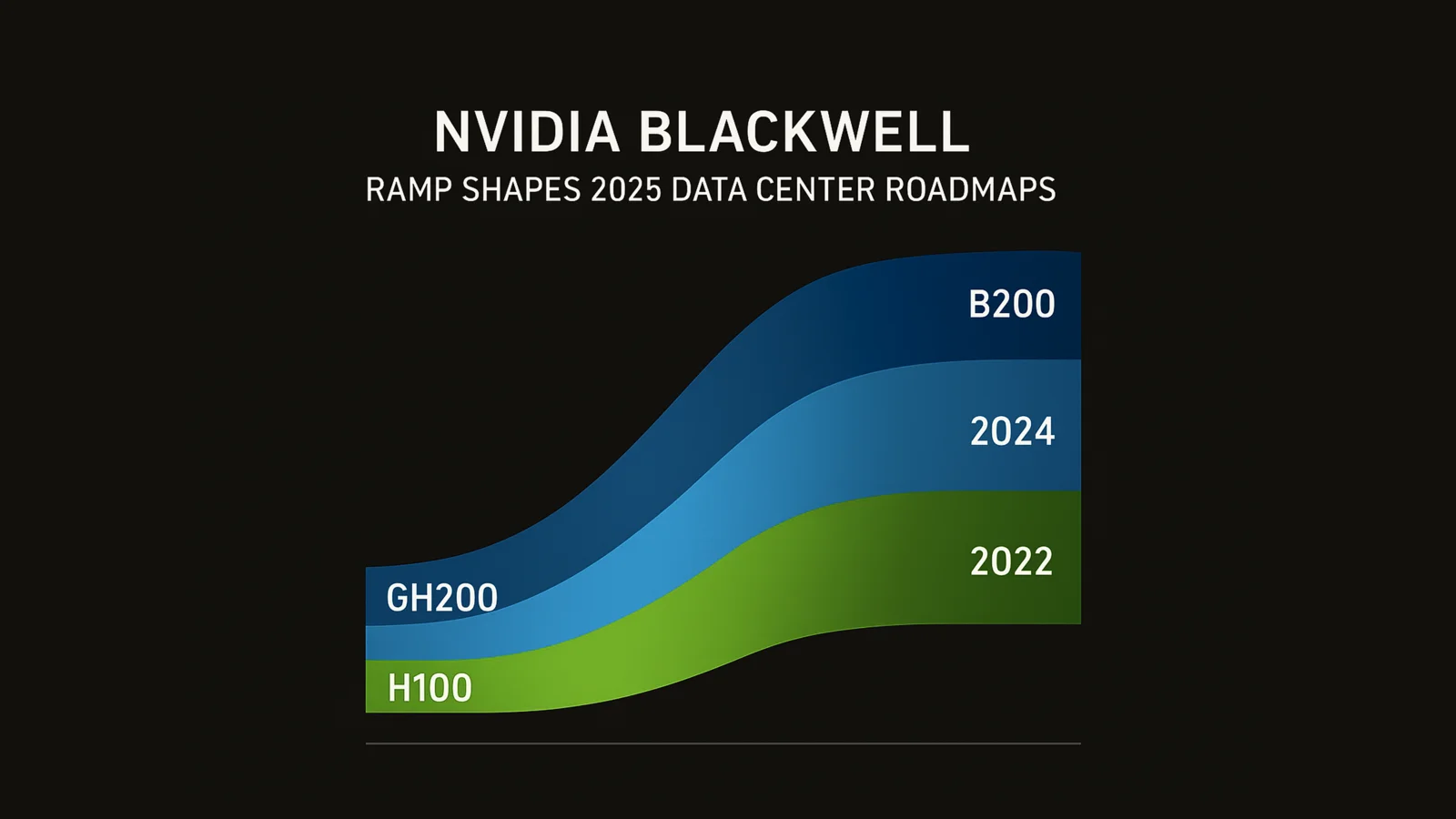

Late 2024 and early 2025 brought reports of thermal and rack design challenges that slowed some Blackwell rollouts. By the February quarter, Nvidia and partners told a different story, describing the generation as the fastest ramp in company history, with the ramp “beginning in earnest” in fiscal Q4 that ended in January 2025. In practice, many buyers extended Hopper while slotting Blackwell for new clusters and greenfield rooms. The lesson is simple. Treat Blackwell adoption as a staged program, not a single forklift upgrade.

Power and cooling realities you must plan for

GB200 NVL72 is the reference point most teams cite in planning. It links 36 Grace CPUs and 72 Blackwell GPUs in a liquid-cooled rack that behaves like a single 72-GPU domain for training and real-time trillion-parameter inference. Third-party configurations list typical rack power near 132 kW, with the vast majority reserved for the GPU complex. If your facilities are still built around 20–30 kW racks and perimeter cooling, budget for rear-door heat exchangers or full liquid loops and rework your branch circuits, PDUs, and UPS topology.
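The gap between a legacy rack budget and an NVL72-class rack is easier to discuss with facilities once it is written down as arithmetic. The sketch below is illustrative only: the 132 kW figure comes from the text above, while the function names and the 20 percent power reserve are assumptions made for the example.

```python
import math

# Typical NVL72 rack power cited in the text (third-party figure).
NVL72_RACK_KW = 132.0


def legacy_rack_equivalents(rack_kw: float, legacy_rack_kw: float) -> float:
    """Return how many legacy-rack power budgets one dense rack consumes."""
    if legacy_rack_kw <= 0:
        raise ValueError("legacy_rack_kw must be positive")
    return rack_kw / legacy_rack_kw


def racks_supported(room_kw: float, rack_kw: float,
                    reserve_fraction: float = 0.2) -> int:
    """Return the whole racks a room power budget supports.

    reserve_fraction is a hypothetical headroom held back for
    redundancy and non-IT loads; replace it with your site's figure.
    """
    if not 0.0 <= reserve_fraction < 1.0:
        raise ValueError("reserve_fraction must be in [0, 1)")
    usable_kw = room_kw * (1.0 - reserve_fraction)
    return math.floor(usable_kw / rack_kw)


if __name__ == "__main__":
    for legacy_kw in (20.0, 30.0):
        ratio = legacy_rack_equivalents(NVL72_RACK_KW, legacy_kw)
        print(f"One 132 kW rack draws about {ratio:.1f}x a {legacy_kw:.0f} kW rack")
    print("Racks in a 1 MW room:", racks_supported(1000.0, NVL72_RACK_KW))
```

At 20 to 30 kW per legacy rack, one NVL72-class rack consumes the power budget of roughly four to seven older racks, which is why branch circuits and UPS topology need rework rather than a simple swap.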

Network choices for scale out and scale across

Blackwell clusters push traffic patterns that stress traditional leaf-spine Ethernet. Two paths are emerging. Inside a site, Blackwell pairs with low-latency fabrics designed for collective operations. Across sites, Nvidia is now promoting Spectrum-XGS, an enhancement that links multiple data centers so they function like a single AI factory, with software steering to keep collective operations effective over distance. If your roadmap includes multi-region training or inference, plan for both scale out within a site and scale across between sites.

Capacity and supply planning in the packaging era

Even as wafer starts climb, the advanced packaging that binds high-bandwidth memory to GPU dies remains the gating factor. Nvidia has said packaging capacity is the long pole, though the industry has roughly quadrupled available capacity in the past two years. The practical takeaway is to treat packaging slots as a resource you plan for months in advance, just like fiber or floor loading. Secure windows with integrators early, validate memory SKUs, and keep a Hopper extension plan that can slide left or right by a quarter.

What to deploy where in 2025

Use Hopper where you need proven availability, broad software support, and a quick path to production. Slot Blackwell for projects that justify rack-scale commitment and liquid cooling, especially where very large models or multi-agent systems need fast intra-cluster collectives. The NVL72 model makes it easier to think in rack units rather than piecemeal nodes, which simplifies scheduling and observability. Teams that partition clusters by model class and latency target will get better utilization than teams that try to make every rack do everything.

A migration path that reduces risk this quarter

Start by validating your priority workloads on a small Blackwell slice with the same driver, NCCL, and container stack you run in production. Prove mixed precision and communication overlap on your real models rather than on benchmarks. Align your scheduler with the NVLink domain boundaries so jobs do not straddle slow links. Confirm that your logging and red-team traces are stable at higher token rates and larger batch sizes. When the results match or exceed your acceptance gates, expand to a full rack, then a room. Expanding in stages catches the code paths that fail only at scale before they reach a full room.
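As a minimal sketch of what aligning a scheduler with NVLink domain boundaries can mean, the function below places a job only if it fits entirely inside one domain, choosing the domain with the least leftover capacity. The 72-GPU domain size comes from the NVL72 description earlier in the article; the function name, the domain labels, and the best-fit policy are assumptions for illustration, not any real scheduler's API.

```python
from typing import Dict, Optional

# GPUs per NVLink domain in an NVL72 rack, per the article.
DOMAIN_SIZE = 72


def place_job(free_gpus: Dict[str, int], gpus_needed: int) -> Optional[str]:
    """Pick one NVLink domain for a job, or return None if none fits.

    free_gpus maps a hypothetical domain name to its free GPU count.
    Jobs larger than one domain are rejected here rather than split,
    so nothing placed by this function straddles a slower link.
    """
    if gpus_needed <= 0:
        raise ValueError("gpus_needed must be positive")
    if gpus_needed > DOMAIN_SIZE:
        return None
    # Best fit: prefer the domain that leaves the fewest GPUs stranded.
    candidates = [
        (free - gpus_needed, name)
        for name, free in free_gpus.items()
        if free >= gpus_needed
    ]
    if not candidates:
        return None
    return min(candidates)[1]


if __name__ == "__main__":
    domains = {"rack-a": 72, "rack-b": 16}
    print(place_job(domains, 8))    # fits in the smaller gap
    print(place_job(domains, 64))   # needs the empty rack
    print(place_job(domains, 80))   # larger than one domain
```

A production scheduler would also need an explicit policy for jobs that legitimately span domains. The point of the sketch is only that the domain boundary is a first-class constraint in placement, not an afterthought.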

Budget and TCO implications your CFO will ask about

Blackwell’s promise is performance per watt and performance per rack, not just raw speed. You will spend more on liquid infrastructure, power delivery, and networking, but you should close the loop by tracking dollars per trained model and dollars per million tokens served. NVLink domains reduce orchestration tax, which can lower your spend on idle buffers and retries. Spectrum-XGS or similar “scale across” designs can lift utilization by pooling sites, but they also add WAN cost. Put those tradeoffs in one model and review monthly as the ramp matures.
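The two unit metrics named above can live in one small model that finance and engineering both review. The sketch below is an assumption-laden illustration: the cost categories, field names, and sample figures are hypothetical placeholders, not vendor pricing or measured results.

```python
from dataclasses import dataclass


@dataclass
class MonthlyCosts:
    """Hypothetical monthly cost buckets in dollars."""
    amortized_hardware: float
    power_and_cooling: float
    network_and_wan: float

    def total(self) -> float:
        return (self.amortized_hardware
                + self.power_and_cooling
                + self.network_and_wan)


def dollars_per_million_tokens(costs: MonthlyCosts, tokens_served: float) -> float:
    """Return serving cost per one million tokens for the month."""
    if tokens_served <= 0:
        raise ValueError("tokens_served must be positive")
    return costs.total() / (tokens_served / 1_000_000)


def dollars_per_trained_model(costs: MonthlyCosts, models_trained: int) -> float:
    """Return training cost per completed model for the month."""
    if models_trained <= 0:
        raise ValueError("models_trained must be positive")
    return costs.total() / models_trained


if __name__ == "__main__":
    # Placeholder numbers chosen only to make the arithmetic visible.
    month = MonthlyCosts(100_000.0, 30_000.0, 20_000.0)
    print(dollars_per_million_tokens(month, 50e9))
    print(dollars_per_trained_model(month, 3))
```

Keeping WAN cost as its own bucket makes the "scale across" tradeoff visible: pooling sites should raise tokens served faster than it raises the network line, or the unit cost gets worse.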

What the China market dynamic means for global buyers

Policy continues to shape chip mix and delivery timing. Nvidia’s China-compliant H-series alternatives have been through multiple revisions and adjustments, with reports of production halts and new variants in development. If your supply chain touches China or adjacent markets, expect periodic shifts in product codes, performance limits, and lead times. Global buyers should hedge with multi-region sourcing and avoid hard dependencies on a single SKU revision.

Facilities checklist that prevents week one surprises

Confirm available chilled water flow and delta T for every planned rack. Validate grounding, leak detection, and service clearances for door heat exchangers or CDU skids. Prestage spares for quick-connects, hoses, and drip trays. Baseline hot aisle temperatures at full load with a thermal camera during pilot burn-in. Review fire suppression compatibility with liquid systems and update emergency procedures where technicians may disconnect live cooling loops. A single missed facilities detail can erase any performance gains you hoped to show in your first executive demo.
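The flow and delta T check at the top of this checklist rests on the standard heat balance: heat removed equals mass flow times specific heat times temperature rise. The sketch below applies it under loud simplifying assumptions: plain water as the coolant (specific heat about 4.186 kJ/(kg·K), density about 1 kg/L), and all rack heat captured by the liquid loop. Real loops often use glycol mixes and reject some heat to air, so treat the output as a first-pass estimate. The function name is hypothetical.

```python
def required_flow_lpm(heat_kw: float, delta_t_k: float,
                      cp_kj_per_kg_k: float = 4.186,
                      density_kg_per_l: float = 1.0) -> float:
    """Return coolant flow in liters per minute for a given heat load.

    Uses Q = m_dot * cp * delta_T, with Q in kW (kJ/s), so m_dot is kg/s.
    Defaults assume plain water; adjust cp and density for glycol mixes.
    """
    if heat_kw < 0:
        raise ValueError("heat_kw must be non-negative")
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    mass_flow_kg_s = heat_kw / (cp_kj_per_kg_k * delta_t_k)
    return mass_flow_kg_s / density_kg_per_l * 60.0


if __name__ == "__main__":
    # 132 kW is the typical rack power cited earlier in the article.
    for delta_t in (8.0, 10.0, 12.0):
        flow = required_flow_lpm(132.0, delta_t)
        print(f"delta T {delta_t:.0f} K -> about {flow:.0f} L/min")
```

Under these assumptions a 132 kW rack at a 10 K rise needs roughly 189 L/min. Halving the allowed delta T doubles the required flow, which is why both numbers have to be confirmed per rack and not per room.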

Software patterns that make Blackwell shine

Modernize your comms stack first. Make sure NCCL and collective libraries are current and tuned for your link topology. Adopt pipeline and tensor parallelism patterns that keep all GPUs busy within each NVLink domain. Tighten your retrieval and storage IOPS budgets so the GPUs are not waiting on external services. For inference, prefer batching schedulers that group similar requests, and push stateless middle tiers so you can scale horizontally without sticky sessions. These are the quiet wins that often matter more than a new optimizer.
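One way to read "batching schedulers that group similar requests" is to bucket pending requests by model and approximate prompt length before forming batches. The sketch below shows that idea only. The request tuple layout, the 512-token bucket width, and the batch size limit are assumptions for illustration, and production inference servers use more sophisticated policies.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

# A pending request: (request_id, model_name, prompt_token_count).
Request = Tuple[str, str, int]


def length_bucket(prompt_tokens: int, bucket_width: int = 512) -> int:
    """Map a prompt length to a coarse bucket so similar sizes batch together."""
    return prompt_tokens // bucket_width


def group_requests(requests: List[Request], max_batch: int = 8) -> List[List[str]]:
    """Group request ids into batches that share a model and length bucket.

    Arrival order is preserved within each group; groups are emitted in
    sorted key order so the output is deterministic.
    """
    if max_batch <= 0:
        raise ValueError("max_batch must be positive")
    groups: Dict[Tuple[str, int], List[str]] = defaultdict(list)
    for request_id, model, prompt_tokens in requests:
        groups[(model, length_bucket(prompt_tokens))].append(request_id)

    batches: List[List[str]] = []
    for key in sorted(groups):
        ids = groups[key]
        # Split oversized groups into chunks of at most max_batch.
        for start in range(0, len(ids), max_batch):
            batches.append(ids[start:start + max_batch])
    return batches


if __name__ == "__main__":
    pending = [("r1", "chat", 100), ("r2", "chat", 2000), ("r3", "chat", 120)]
    print(group_requests(pending, max_batch=2))
```

Because the grouping function keeps no state between calls, it can sit in a stateless middle tier and scale horizontally, consistent with the advice above about avoiding sticky sessions.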

Security and governance that will pass procurement

Bigger clusters magnify risk. Keep model weights, adapters, prompts, and routing logic in version control with signed releases and a rollback plan. Enforce least privilege on both the control plane and the data plane. Log prompts, tool calls, and decisions in a format your compliance team can export without vendor tickets. If you operate across rooms or regions, document how Spectrum-XGS or your chosen fabric segments tenants and preserves audit trails end to end. Buyers now ask these questions up front because the clusters are too valuable to treat security as an afterthought.
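For the logging requirement, a vendor-neutral format such as one JSON object per line is one option that compliance teams can export with standard tools. The sketch below is a hypothetical record layout, not a standard. Every field name is an assumption, and signing, retention, and redaction are out of scope here.

```python
import json
from typing import Any, Dict, List


def audit_record(timestamp: str, tenant: str, model_version: str,
                 prompt: str, tool_calls: List[Dict[str, Any]],
                 decision: str) -> str:
    """Serialize one audit event as a single JSON line.

    The timestamp is passed in by the caller so the function stays
    deterministic and easy to test. Keys are sorted for stable diffs.
    """
    record = {
        "timestamp": timestamp,
        "tenant": tenant,
        "model_version": model_version,
        "prompt": prompt,
        "tool_calls": tool_calls,
        "decision": decision,
    }
    # json.dumps escapes embedded newlines, so each record stays on one line.
    return json.dumps(record, sort_keys=True, ensure_ascii=False)


if __name__ == "__main__":
    line = audit_record(
        "2025-01-01T00:00:00Z", "tenant-a", "model-v1",
        "Summarize the incident report.",
        [{"name": "search", "arguments": {"query": "incident"}}],
        "allowed",
    )
    print(line)
```

Recording the model version alongside each prompt ties the log back to the signed release that produced the output, which is what makes a rollback auditable.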

Planning for the next jump

Roadmaps already point to co-packaged optics and higher radix switches as early as 2026, which will change rack layouts and cable plants again. If you are making big construction moves in 2025, reserve space, power, and tray capacity for that evolution. Keep logical isolation in software so you can migrate racks without painful cutovers. The goal is flexibility. Blackwell is a major step, but the network layer is about to change just as much as the compute layer.

The bottom line for technical solution seekers

Blackwell’s ramp is real and it is already reshaping project plans. Treat it as a rack-scale building block with liquid cooling and advanced fabrics, not as a drop-in card. Use Hopper to keep shipping, slot Blackwell where the performance leap outweighs facilities work, and design your network for both scale out and scale across. Secure packaging slots early, validate your software stack on small slices, and expand with discipline. Teams that do this will land measurable wins in 2025 while competitors wait for the perfect room that never arrives.
